How to Choose the Right AI Model for Your Business: The Garage Guide

You've probably heard your CTO (or that AI-enthusiastic person on your team) say something like "we use GPT-4" or "we switched to Claude." You nodded. You moved on. But did you actually understand what that choice means for your budget, your product, and your competitive edge?

Most business leaders don't. And that's costing them money.

Here's the truth no AI vendor will tell you: the most expensive AI model is almost never the right choice for every task in your business. Picking an AI model is not about getting the "best" one. It's about matching the right tool to the job.

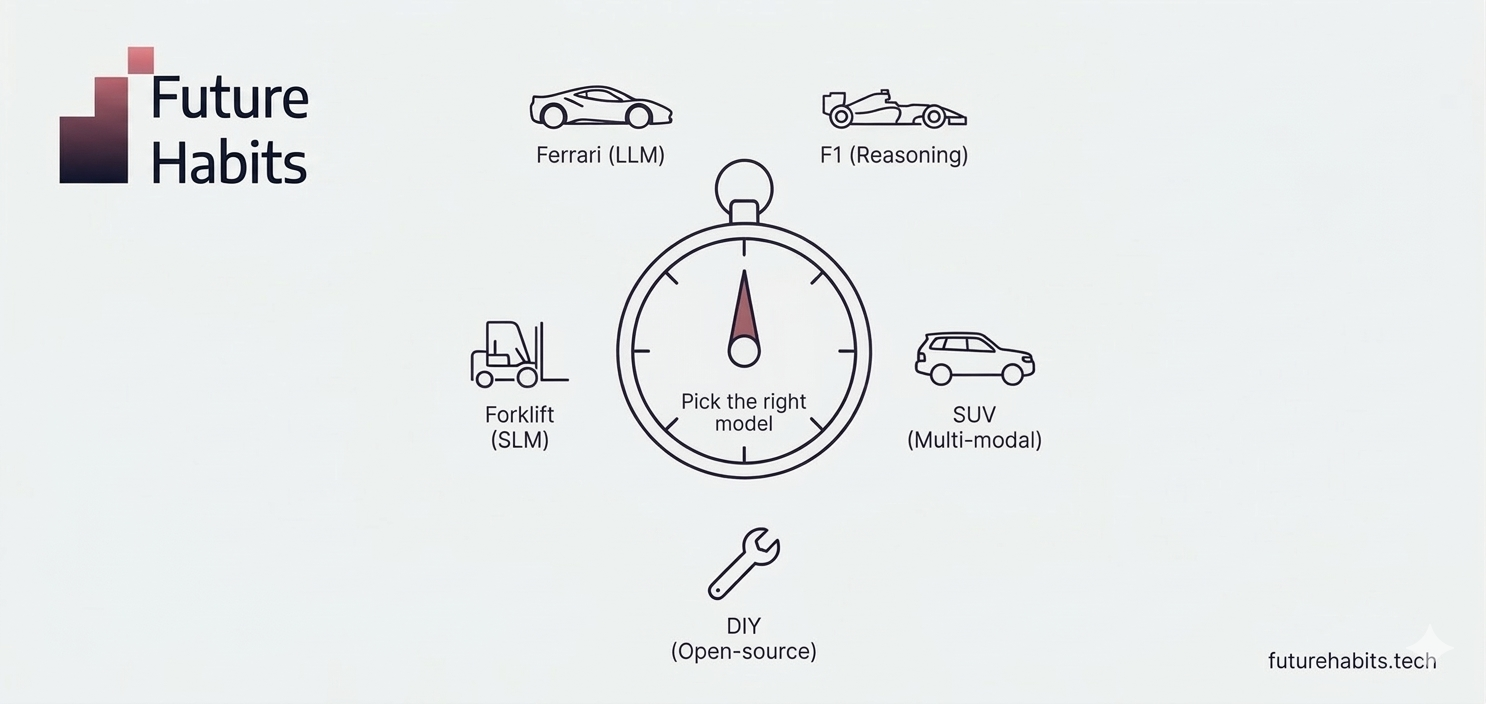

Think of it like vehicles. You wouldn't use a Ferrari to move pallets in a warehouse. You'd use a forklift. The forklift does the job better, cheaper, and without complaining about the load.

This guide breaks down every type of AI model in plain language, tells you when to use each one, and helps you stop overpaying for horsepower you don't need.

What is an AI model, actually?

An AI model is a computer program trained to take input and generate a response. You give it a prompt (a question, instruction, or piece of data). The model processes that input. It produces output.

That's it. No magic. No sentience. Just software that learned patterns from massive amounts of data.

The key insight for business leaders: different models were trained differently, on different data, for different purposes. Which means they have different strengths, different costs, and different limitations.

Choosing the wrong model is like hiring a senior strategy consultant to do data entry. Technically possible. Wildly expensive. Not the smartest use of resources.

The garage: five types of AI models you need to know

Here's a framework I use with every founder and innovation team I work with. I call it "the garage" because every business needs a fleet, not just one vehicle.

Your Ferrari: Large Language Models (LLMs)

What they are: The flagship models from the major AI companies. GPT-4 and GPT-4o from OpenAI. Claude Opus from Anthropic. Gemini Pro from Google. These are the largest general-purpose models, widely reported to have hundreds of billions of parameters or more (vendors rarely publish exact sizes), trained on massive datasets, and capable of complex reasoning across virtually any domain.

What they're good at: Long-form content creation. Complex document analysis (contracts, research papers, strategy documents). Multi-step reasoning. Creative brainstorming. Code generation and review. Translation with nuance. Tasks where quality matters more than cost.

What they cost: Premium pricing. Depending on the provider and usage volume, top-tier models have been listed at around $15 per million input tokens and $75 per million output tokens. That adds up fast when you're processing thousands of requests per day.

When to use them: When the task demands nuance, creativity, or complex reasoning that cheaper models genuinely can't handle. An 80-page contract review? Ferrari. A nuanced investor update? Ferrari. A complex data analysis with multi-step logic? Ferrari.

When NOT to use them: For any task that's simple, repetitive, or well-defined. Classifying support tickets. Extracting structured data from invoices. Routing emails. Summarizing meeting notes. These tasks don't need a Ferrari.

Your forklift: Small Language Models (SLMs)

What they are: Compact, efficient models with fewer parameters (typically under 14 billion). Microsoft's Phi family. Google's Gemma. Mistral Small. These models are trained on curated, high-quality data and optimized for speed and cost-efficiency.

What they're good at: Classification tasks (spam detection, sentiment analysis, ticket routing). Data extraction from structured documents. Summarization of straightforward content. Repetitive, well-defined tasks at scale.

What they cost: A fraction of LLM pricing. Often 10-50x cheaper per request. Some can run on a single GPU or even a laptop, eliminating API costs entirely.

When to use them: When the task is clear, repetitive, and high-volume. Customer support ticket routing? Forklift. Email classification? Forklift. Invoice data extraction? Forklift. In most businesses, 70-80% of AI tasks fall into forklift territory.

The real numbers: A business processing 100,000 customer support interactions per month might spend $7,500-15,000 running them through an LLM. The same volume through an SLM? $150-800. That's not a rounding error. That's the difference between a sustainable AI feature and a budget disaster.
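If you want to sanity-check numbers like these against your own workload, a back-of-the-envelope calculation is usually enough. The sketch below is illustrative only: `monthly_cost` is a hypothetical helper, and the token counts and per-million-token prices are assumptions picked for the example, not quotes from any provider.

```python
def monthly_cost(requests_per_month, input_tokens, output_tokens,
                 input_price_per_m, output_price_per_m):
    """Estimate monthly API spend in dollars.

    Token counts are per request; prices are dollars per million tokens.
    """
    per_request = (input_tokens * input_price_per_m
                   + output_tokens * output_price_per_m) / 1_000_000
    return requests_per_month * per_request


# Assumed workload: 100,000 interactions, ~2,000 tokens in and ~600 out each.
VOLUME = 100_000
IN_TOKENS, OUT_TOKENS = 2_000, 600

# Assumed prices (dollars per million tokens); check your provider's price list.
llm = monthly_cost(VOLUME, IN_TOKENS, OUT_TOKENS, 15.00, 75.00)
slm = monthly_cost(VOLUME, IN_TOKENS, OUT_TOKENS, 0.30, 1.50)

print(f"LLM: ${llm:,.0f} per month")
print(f"SLM: ${slm:,.0f} per month")
print(f"Ratio: {llm / slm:.0f}x")
```

With these assumed inputs the estimate lands at the low end of both ranges above. Plug in your own token counts and current prices before drawing conclusions.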

Your SUV: Multi-modal models

What they are: Models that handle multiple types of input and output in a single conversation. Text, images, audio, video. GPT-4o (the "o" stands for "omni"). Claude with vision capabilities. Gemini's native multi-modal models.

What they're good at: Processing images alongside text. Combining voice input with text output. Analyzing video content. Any workflow where different types of data need to come together.

When to use them: When your business process involves more than just text. Processing photos of warehouse inventory? SUV. Analyzing charts from a PDF and writing a summary? SUV. Building a customer support tool where users send photos of damaged products? SUV.

Practical example: A logistics company I worked with was manually reviewing photos of delivery damage. They switched to a multi-modal model that takes the photo, identifies the damage type, estimates severity, and generates a claim summary. What took 15 minutes per case now takes 30 seconds.

Your F1 car: Reasoning models

What they are: Specialized models that take extra time to "think through" complex problems step by step before responding. OpenAI's o-series models, such as o3. Claude with extended thinking. These models use "chain-of-thought" reasoning, breaking complex problems into smaller steps.

What they're good at: Complex mathematical calculations. Multi-step logical analysis. Financial modeling and scenario planning. Scientific reasoning. Code debugging that requires understanding entire system architectures.

What they cost: The most expensive per-request. They use more compute because they generate internal reasoning steps before producing a final answer. But for the right tasks, the accuracy improvement justifies the cost.

When to use them: When the stakes are high and the problem is genuinely complex. Pricing optimization involving multiple variables? F1 car. Regulatory compliance analysis with edge cases? F1 car.

When NOT to use them: For anything straightforward. Drafting your Monday newsletter does not require a reasoning model. Using a reasoning model for simple tasks is like taking an F1 car to a parking lot. Impressive. Pointless. Expensive.

Your DIY kit car: Open-weight models

What they are: Models where the AI company releases the internal parameters (called "weights") so anyone can download, run, modify, and host them independently. Meta's Llama. Mistral. Alibaba's Qwen. These are free to download, though each comes with its own license terms, and you pay for the hardware to run them.

What they're good at: Much of what the proprietary models can do, with the added benefit of full control. Your data never leaves your servers. No per-request API fees. Full customization through fine-tuning. No vendor lock-in.

When to use them: When data privacy is non-negotiable (GDPR-sensitive data, healthcare records, financial information). When you want to reduce long-term costs at scale. When you need full control over the model's behavior.

The European angle: For EU-based businesses dealing with GDPR, data sovereignty, and the EU AI Act, open-weight models deserve serious consideration. Mistral, built in Paris, is a particularly relevant option. Running an open-weight model on European infrastructure means your data stays in the EU, on your terms.

The decision framework: match the vehicle to the road

Before you commit to any AI model, map your actual tasks.

Step 1: List every AI use case in your business. Be specific. Not "customer support" but "classify incoming support tickets by urgency" and "draft initial response to common questions."

Step 2: Categorize each task.

- Simple, repetitive, and high-volume? Forklift job (SLM).

- Requires nuance, creativity, or complex reasoning? Ferrari job (LLM).

- Involves images, audio, or video alongside text? SUV job (multi-modal).

- Complex, high-stakes calculation or analysis? F1 car job (reasoning model).

- Sensitive data that can't leave your infrastructure? DIY kit car job (open-weight model).

Step 3: Calculate costs for each approach. Most businesses discover that 70-80% of their AI tasks fit the forklift category. Only 10-20% genuinely need the Ferrari.
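Here is a rough way to run that calculation. Treat it as a sketch, not a pricing model: `blended_monthly_cost` is a hypothetical helper, the per-request costs are assumed values that sit inside the ranges quoted earlier in this guide, and the 75/25 split ignores the other vehicle types for simplicity.

```python
def blended_monthly_cost(volume, shares, cost_per_request):
    """Total monthly cost when traffic is split across model tiers.

    shares: fraction of requests handled by each tier (must sum to 1).
    cost_per_request: dollars per request for each tier.
    """
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return sum(volume * share * cost_per_request[tier]
               for tier, share in shares.items())


VOLUME = 100_000  # requests per month (assumed)

# Assumed per-request costs in dollars; replace with your own measurements.
COST = {"slm": 0.005, "llm": 0.10}

all_llm = blended_monthly_cost(VOLUME, {"llm": 1.0}, COST)
mixed = blended_monthly_cost(VOLUME, {"slm": 0.75, "llm": 0.25}, COST)

print(f"Everything on the LLM: ${all_llm:,.0f}")
print(f"75% SLM / 25% LLM:     ${mixed:,.0f}")
print(f"Savings:               {1 - mixed / all_llm:.0%}")
```

Under these assumptions, the LLM requests still make up most of the remaining bill, which is why getting the split right matters more than shaving the SLM price.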

Step 4: Build a multi-model stack. The smartest companies use a "router" approach: simple queries go to the SLM, complex ones escalate to the LLM, sensitive data stays on the open-weight model.
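For the technical person on your team, a router can start as a handful of rules. The sketch below is one possible shape, not a production design: the `Task` fields, the `route` function, and the order in which the checks run are all assumptions made for illustration. Real routers often use a small classifier model instead of hand-set flags.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    sensitive: bool = False     # data must stay on your own infrastructure
    has_media: bool = False     # images, audio, or video alongside text
    high_stakes: bool = False   # complex calculation or analysis
    needs_nuance: bool = False  # creativity or open-ended reasoning


def route(task: Task) -> str:
    """Return the garage tier for a task, checking hard constraints first."""
    if task.sensitive:
        return "open-weight"  # DIY kit car
    if task.has_media:
        return "multi-modal"  # SUV
    if task.high_stakes:
        return "reasoning"    # F1 car
    if task.needs_nuance:
        return "llm"          # Ferrari
    return "slm"              # forklift: default for simple, high-volume work


tasks = [
    Task("Classify incoming support tickets by urgency"),
    Task("Review an 80-page contract", needs_nuance=True),
    Task("Summarize photos of delivery damage", has_media=True),
    Task("Pricing optimization across multiple variables", high_stakes=True),
    Task("Extract fields from patient records", sensitive=True),
]

for task in tasks:
    print(f"{route(task):12} <- {task.description}")
```

Data sensitivity is checked first here because it is a hard constraint rather than a preference. The forklift is the fallback, so nothing reaches an expensive model by accident.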

Common mistakes I see founders make

- Using GPT-4 for everything. Easy to set up, but expensive at scale. I've seen startups burn through their entire API budget in a week.

- Choosing based on benchmarks, not use cases. A model scoring highest on coding benchmarks might be terrible at writing customer emails. Always test with your actual data.

- Ignoring latency. If your customer-facing chatbot takes 8 seconds to respond, customers leave. Smaller, faster models often deliver better user experience.

- Not considering the total cost. API pricing is per token, for both input and output, and output tokens usually cost more. Factor in how many tokens each request consumes, not just the quality of the answer.

- Treating AI model selection as a one-time decision. Models improve. Pricing changes. Revisit your model stack quarterly.
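Several of these mistakes come down to not measuring on your own workload. A minimal test harness, sketched below, runs each candidate over a handful of your labeled examples and reports accuracy and latency side by side. Everything in it is a stand-in: `evaluate` is a hypothetical helper, the two "models" are plain functions so the sketch runs offline, and the examples are invented. Swap in real API or local model calls and a sample of your actual data.

```python
import statistics
import time


def evaluate(model_fn, examples):
    """Run a model over labeled examples; return accuracy and latency stats."""
    correct = 0
    latencies = []
    for prompt, expected in examples:
        start = time.perf_counter()
        answer = model_fn(prompt)
        latencies.append(time.perf_counter() - start)
        correct += answer == expected
    return {
        "accuracy": correct / len(examples),
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
    }


# Stand-in "models" so the sketch runs offline; replace with real model calls.
def keyword_model(prompt):
    return "urgent" if "down" in prompt.lower() else "normal"


def always_normal_model(prompt):
    return "normal"


# Invented examples; replace with a sample of your own labeled data.
examples = [
    ("Our checkout page is down", "urgent"),
    ("How do I change my avatar?", "normal"),
    ("Site is DOWN for all users", "urgent"),
    ("Can I get an invoice copy?", "normal"),
]

for name, fn in [("keyword", keyword_model), ("baseline", always_normal_model)]:
    print(name, evaluate(fn, examples))
```

Rerunning the same harness each quarter, with the same examples, also gives you a consistent way to compare new models and new prices against what you already use.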

The bottom line

AI model selection is a strategic decision, not just a technical one. It directly impacts your unit economics, your user experience, your data privacy posture, and your competitive position.

The companies winning with AI are not the ones using the most advanced models. They're the ones with the clearest understanding of when and how to apply different tools strategically.

Stop asking "what's the best AI model?" Start asking "what's the right AI model for this specific job?"

Match the vehicle to the road, not to your ego.

Need help building your AI model strategy?

At FutureHabits.Tech, I work with founders, innovation teams, and corporate leaders to make smart AI decisions. From model selection to prototype building to full workflow automation, we help you pick the right tools and ship real products.

Whether you're a startup figuring out your first AI feature or an enterprise team optimizing your existing AI stack, we can help.


Written by Kasia Sadowska, founder of FutureHabits.Tech and Director of Founder Institute Austria.

Last updated: March 2026